The VICARIUS Platform

Virgin Islands Center for Autonomous Research (VICAR)

Virgin Islands Center for Autonomous Research Integrated Undersea Survey (VICARIUS)

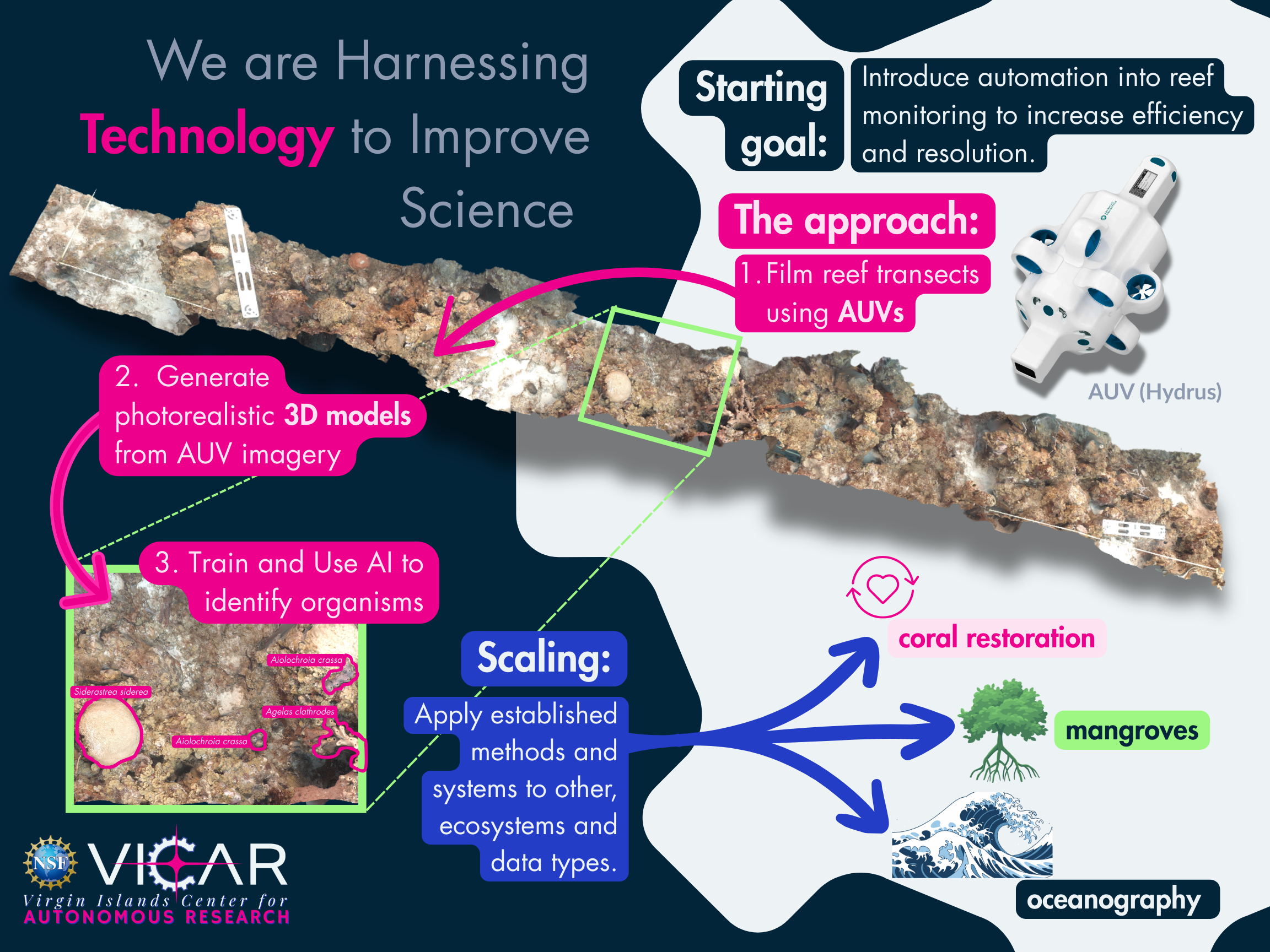

Robotics and AI are at the heart of the VICAR project, and the VICARIUS platform is our centerpiece technology. VICAR is fundamentally about bringing new technology to ecological research – augmenting the old clipboard-and-snorkel approach with autonomous robots and smart software.

Our areas of research include:

- Robotics: VICAR uses a fleet of robotic systems to collect repeatable, high-resolution imagery across reef and coastal habitats. Underwater, autonomous underwater vehicles (AUVs) follow pre-programmed survey routes along established monitoring transects.
- 3D Mapping & Photogrammetry: 3D mapping and photogrammetry transform overlapping images collected by AUVs and drones into detailed, photorealistic 3D models of reefs and coastal habitats.
- AI & Deep Learning Tools: VICAR's AI classification system is designed to process large volumes of imagery from 3D models and video consistently and at scale.

ROBOTICS

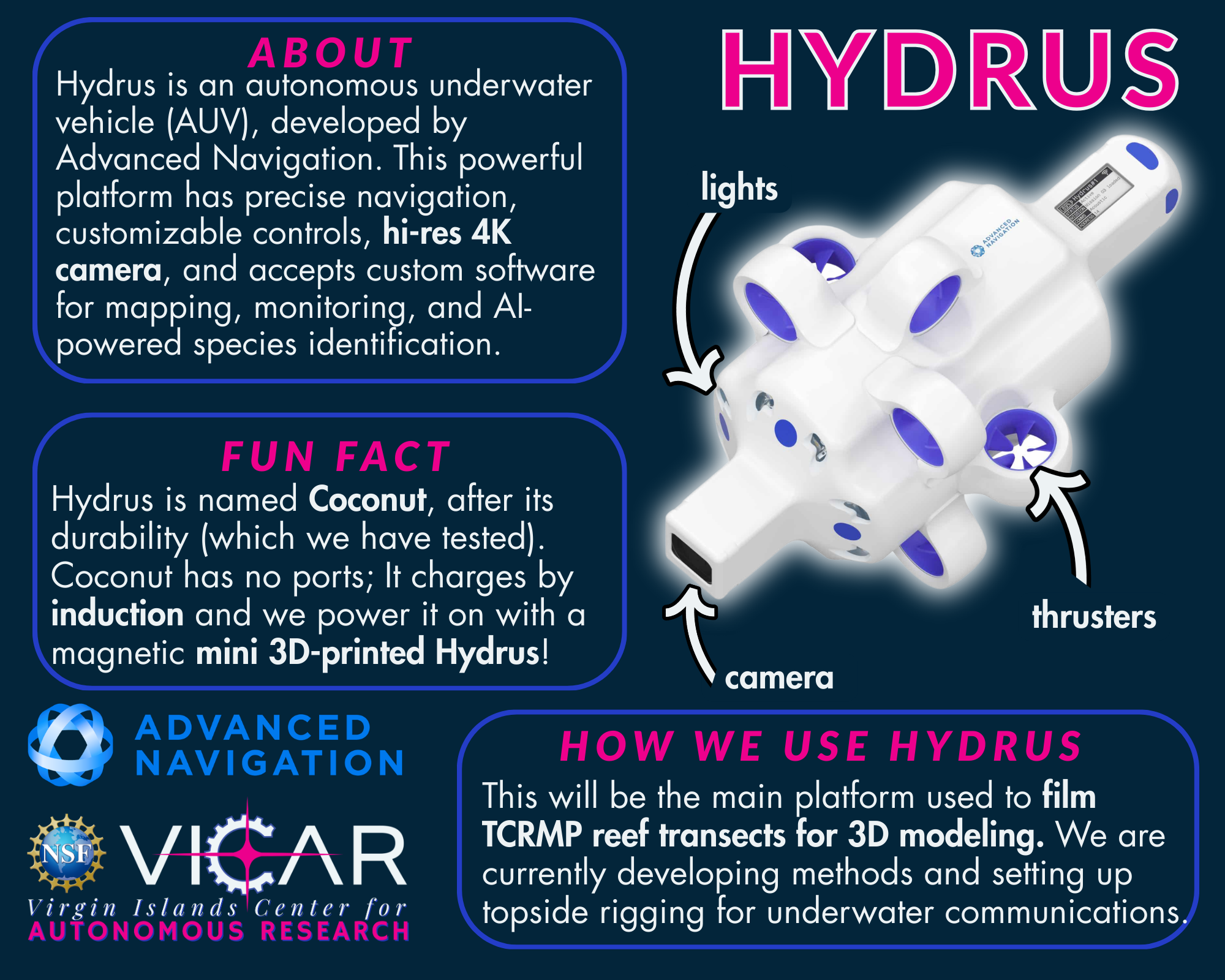

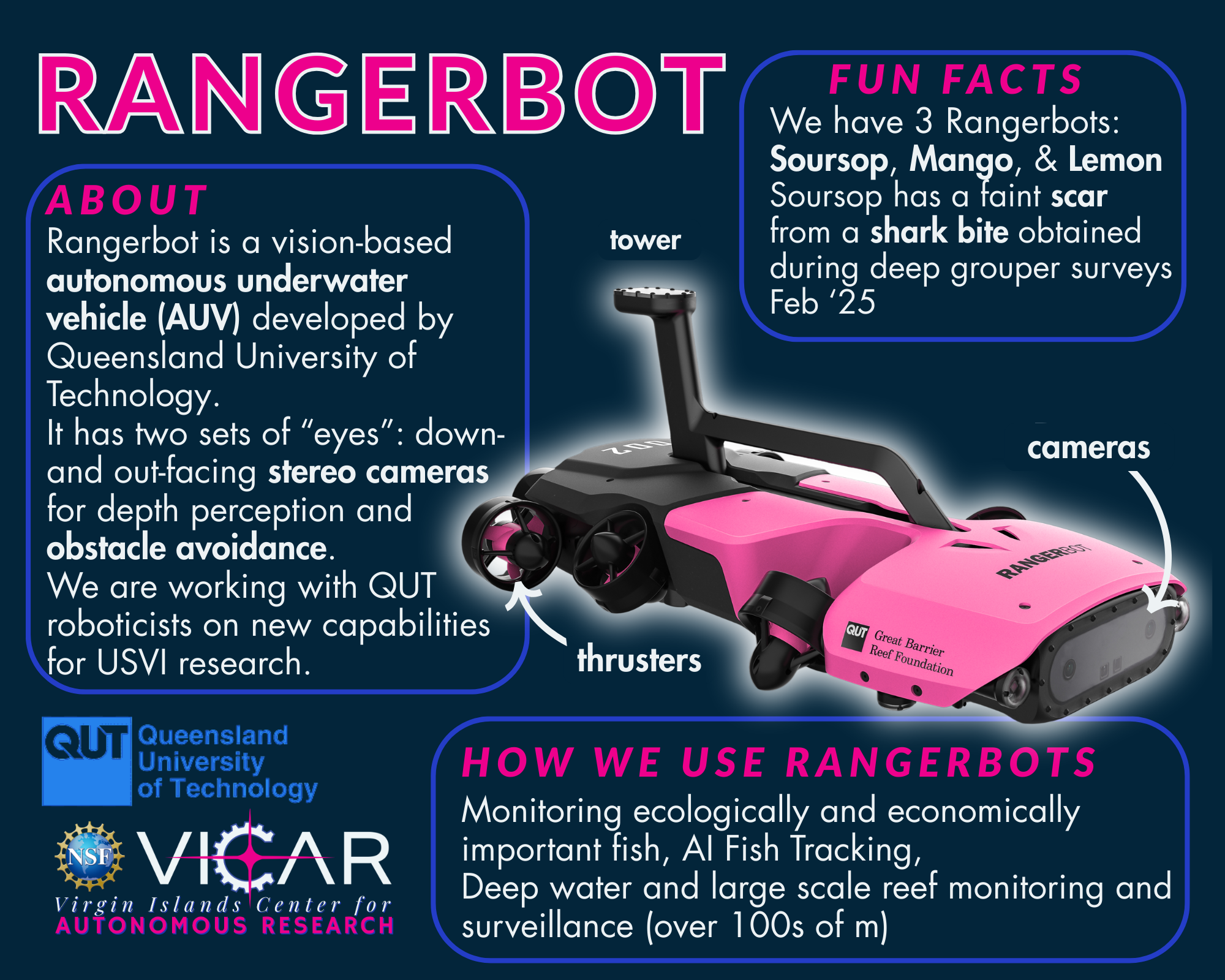

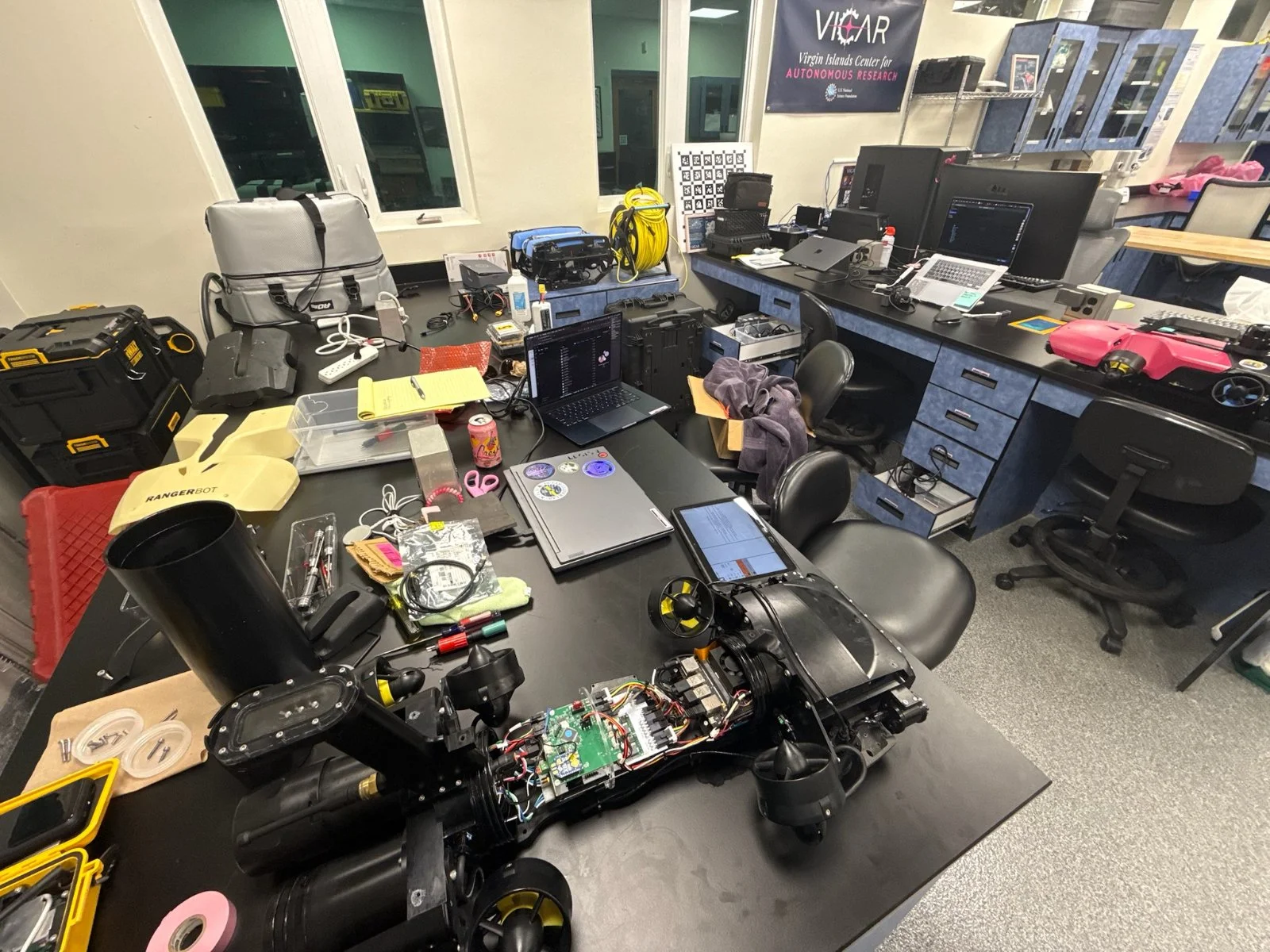

VICAR uses a fleet of robotic systems to collect repeatable, high-resolution imagery across reef and coastal habitats. Underwater, autonomous underwater vehicles (AUVs), including the RangerBot and Hydrus microAUV, follow pre-programmed survey routes to capture dense image datasets along established monitoring transects. These systems reduce reliance on diver time, enable access to deeper and more challenging sites (including mesophotic reefs at 30–41 m depth), and produce the standardized imagery needed to build 3D reef models and train AI classifiers.

For coastal habitats, we will use aerial drones to collect high-resolution imagery over mangroves where ground access is limited. Drone surveys support 3D modeling of canopy and root structures, as well as measurements of canopy coverage and propagule survival, turning restoration efforts into trackable, quantifiable outcomes.
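To make the idea of a pre-programmed survey route concrete, here is a minimal sketch of a back-and-forth ("lawnmower") pattern over a rectangular corridor around a transect. The function name `lawnmower_route`, the local coordinate frame, and the 25 m by 2 m corridor with 1 m lane spacing are illustrative assumptions, not the mission parameters or planning software used with the RangerBot or Hydrus.

```python
import math
from typing import List, Tuple

# Hypothetical local frame: (metres along transect, metres across transect).
Waypoint = Tuple[float, float]


def lawnmower_route(length_m: float, width_m: float, lane_spacing_m: float) -> List[Waypoint]:
    """Return back-and-forth waypoints covering a length_m x width_m corridor."""
    if length_m <= 0 or width_m < 0 or lane_spacing_m <= 0:
        raise ValueError("length and lane spacing must be positive; width must be non-negative")

    # A lane on each edge, plus interior lanes no further apart than lane_spacing_m.
    n_lanes = math.ceil(width_m / lane_spacing_m) + 1

    route: List[Waypoint] = []
    for lane in range(n_lanes):
        # Clamp the final lane so it sits on the far edge of the corridor.
        across = min(lane * lane_spacing_m, width_m)
        # Reverse direction on every other lane so consecutive lanes join end to end.
        ends = (0.0, length_m) if lane % 2 == 0 else (length_m, 0.0)
        route.append((ends[0], across))
        route.append((ends[1], across))
    return route


if __name__ == "__main__":
    # Illustrative values only (assumed, not from the VICAR protocol).
    for waypoint in lawnmower_route(length_m=25.0, width_m=2.0, lane_spacing_m=1.0):
        print(waypoint)
```

In practice the lane spacing would be chosen from the camera footprint and the image overlap that photogrammetry requires, and the waypoints would be converted to the vehicle's own mission format.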

Together, these platforms enable consistent, scalable data collection across ecosystem types that would be impractical to survey through traditional methods alone. Students gain hands-on training in mission planning, robotics deployment, and data collection, skills that are directly transferable to careers in marine science and autonomous systems.

AUV moving through a large Nassau grouper aggregation.

3D MAPPING & PHOTOGRAMMETRY

3D mapping and photogrammetry transform overlapping images collected by AUVs and drones into detailed, photorealistic 3D models of reefs and coastal habitats. Rather than producing flat photographs, this approach generates measurable surfaces that capture the physical structure of an ecosystem. From these models, structural complexity metrics can be calculated to link physical habitat characteristics to ecological patterns and biodiversity. Models also preserve a spatially accurate visual record of a site that can be revisited for new analyses without returning to the field.
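One commonly used structural complexity metric is surface rugosity: the ratio of a model's 3D surface area to the area of its projection onto a horizontal plane. The sketch below computes it for a small triangle mesh. It assumes the mesh is given as plain vertex and triangle lists with z pointing up and no overhangs; the function names are hypothetical, and this is not necessarily the metric or code used in the VICARIUS pipeline.

```python
import math
from typing import Sequence, Tuple

Vertex = Tuple[float, float, float]   # (x, y, z) in metres, z up (assumed)
Triangle = Tuple[int, int, int]       # indices into the vertex list


def _triangle_area(a: Vertex, b: Vertex, c: Vertex) -> float:
    """Area of a 3D triangle: half the magnitude of the edge cross product."""
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    cx = uy * vz - uz * vy
    cy = uz * vx - ux * vz
    cz = ux * vy - uy * vx
    return 0.5 * math.sqrt(cx * cx + cy * cy + cz * cz)


def surface_rugosity(vertices: Sequence[Vertex], triangles: Sequence[Triangle]) -> float:
    """Ratio of 3D surface area to planar (x-y projected) area; 1.0 means flat."""
    surface_area = 0.0
    planar_area = 0.0
    for i, j, k in triangles:
        a, b, c = vertices[i], vertices[j], vertices[k]
        surface_area += _triangle_area(a, b, c)
        # Project each vertex onto z = 0 to get the triangle's planar footprint.
        planar_area += _triangle_area(
            (a[0], a[1], 0.0), (b[0], b[1], 0.0), (c[0], c[1], 0.0)
        )
    if planar_area == 0.0:
        raise ValueError("mesh has no horizontal footprint")
    return surface_area / planar_area


if __name__ == "__main__":
    # A 1 m x 1 m patch tilted at 45 degrees (z = x), split into two triangles.
    tilted = [(0.0, 0.0, 0.0), (1.0, 0.0, 1.0), (1.0, 1.0, 1.0), (0.0, 1.0, 0.0)]
    faces = [(0, 1, 2), (0, 2, 3)]
    print(surface_rugosity(tilted, faces))
```

A flat patch gives a value of 1.0, and the value rises as the surface becomes more convoluted, which is what makes the ratio useful for comparing habitat structure between sites or survey years.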

3D models support clear before-and-after comparisons following disturbances such as storms, hurricanes, and bleaching events. They also provide the spatial data on which AI classifiers are trained, making 3D photogrammetry a foundational step in the VICARIUS pipeline. This approach is currently applied at the 31 long-term monitoring sites of the Territorial Coral Reef Monitoring Program (TCRMP) across the USVI, where over 450 3D models have been produced to date.

AI & DEEP LEARNING TOOLS

VICAR's AI classification system is designed to process large volumes of imagery from 3D models and video consistently and at scale. Because the field of computer vision and deep learning is advancing rapidly, the team maintains an ongoing state-of-the-art review process to identify and evaluate emerging tools as they become available, rather than committing to a fixed set of architectures at the outset. This adaptive approach ensures that the classification pipeline reflects current best practices as the platform matures.

One framework under consideration is a hierarchical classification approach, in which a broad model first categorizes major benthic groups and substrate types, and specialized submodels then refine classification for specific organism groups such as coral species, sponges, octocorals, and benthic primary producers. The team is also actively testing Meta's Segment Anything Model 3 (SAM3), which enables a researcher to provide a small number of annotated image examples and automatically segment matching instances across thousands of frames—substantially reducing the annotation burden that has historically been the primary bottleneck in applying AI to ecological imagery. Additional tools under evaluation include TagLab for AI-assisted annotation of reef orthoimages and VisCore for navigating and analyzing reef photographs as 3D digital twins.
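The hierarchical approach described above can be sketched as a simple two-stage dispatch: a coarse classifier assigns a broad group, and a specialized submodel is consulted only if one is registered for that group. Everything in this sketch is an assumption for illustration: the function `classify_hierarchical`, the group names, and the stand-in classifiers (which just read a hint from the input) are hypothetical and do not represent trained models or the team's eventual design.

```python
from typing import Any, Callable, Dict, Optional, Tuple

# A classifier is any callable that maps an image patch to a label.
Classifier = Callable[[Any], str]


def classify_hierarchical(
    patch: Any,
    coarse_model: Classifier,
    submodels: Dict[str, Classifier],
) -> Tuple[str, Optional[str]]:
    """Return (broad group, refined label or None if no submodel applies)."""
    group = coarse_model(patch)
    refine = submodels.get(group)
    if refine is None:
        # No specialist for this group, so the broad label is the final answer.
        return group, None
    return group, refine(patch)


# Stand-in classifiers for demonstration only: real models would run
# inference on pixel data instead of reading pre-filled hints.
def toy_coarse_model(patch: Dict[str, str]) -> str:
    return patch["group_hint"]


def toy_coral_model(patch: Dict[str, str]) -> str:
    return patch["label_hint"]


if __name__ == "__main__":
    submodels = {"hard coral": toy_coral_model}  # hypothetical registry
    patches = [
        {"group_hint": "hard coral", "label_hint": "coral species A"},
        {"group_hint": "sand", "label_hint": ""},
    ]
    for patch in patches:
        print(classify_hierarchical(patch, toy_coarse_model, submodels))
```

Keeping the submodels in a registry keyed by group means a new specialist (for sponges or octocorals, say) can be added or swapped without retraining the broad model, which fits the modular, evolving pipeline the team describes.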

This testing and development work is supported by a purpose-built high-performance computing workstation—featuring dual NVIDIA RTX 5090 GPUs and over 30 terabytes of NVMe storage—that provides the processing capacity required for training deep learning models on large image datasets. All models and code are version-controlled using Git and housed within the VICARIUS platform, ensuring that classification workflows remain reproducible, modular, and transferable as methods continue to evolve.